GDP.pdf: Can $100B AI Models Master the Documents that Run the World?

Introducing our new expert multimodal reasoning benchmark.

One of our expert Surgers – an ER physician who helps us teach AI how to triage medical cases – recently asked a frontier model to help determine the correct treatment for a patient with an acute pulmonary embolism. This is the kind of use case that comes up every day in medical departments across the world, and it required understanding a PDF covering treatment guidelines in 2026.

Every single frontier model failed.

We want models that can act as enterprise agents. To do that, they need to master the documents that run the world.

The Unglamorous Lifeblood of the Economy

Parsing PDFs isn't the sexiest area of AI research. It doesn't produce viral videos, code flashy apps, or generate splashy headlines. But PDFs are the unglamorous lifeblood of the global economy – capturing every medical record, earnings report, contract, and invoice.

They’re also the lifeblood of AI agents. If we expect autonomous agents to genuinely transform day-to-day work, they have to natively master these formats: reading them, organizing them, cross-referencing dense data, and accurately filling them out.

When models fail at this level, the consequences are serious:

- Finance — A model transposes two numbers from a quarterly earnings table, and a fabricated margin profile circulates in a buy-side memo.

- Legal — A model hallucinates the location of a liability cap in a commercial lease, leading to catastrophic legal advice.

- Healthcare — A model pulls the wrong row from a drug interaction chart, creating a life-threatening patient safety hazard.

All of these failures happened in our testing.

Measuring the Essential: GDP.pdf

To measure the unsexy-but-essential work that keeps the economy moving, we built GDP.pdf. We're releasing its public set today on Hugging Face here.

GDP.pdf is an expert multimodal and reasoning benchmark. It consists of 100 real-world prompts and PDFs pulled directly from professional workflows across ten domains: Finance, Healthcare, Legal, STEM/Research, Engineering, Construction, Manufacturing/Supply Chain, Insurance, Real Estate, and HR.

Every task requires parsing, understanding, and synthesizing complex PDFs: for example, interpreting a multi-page dosage table, isolating an indemnification clause buried in nested exhibits, or reconciling revenue figures across quarterly filings.

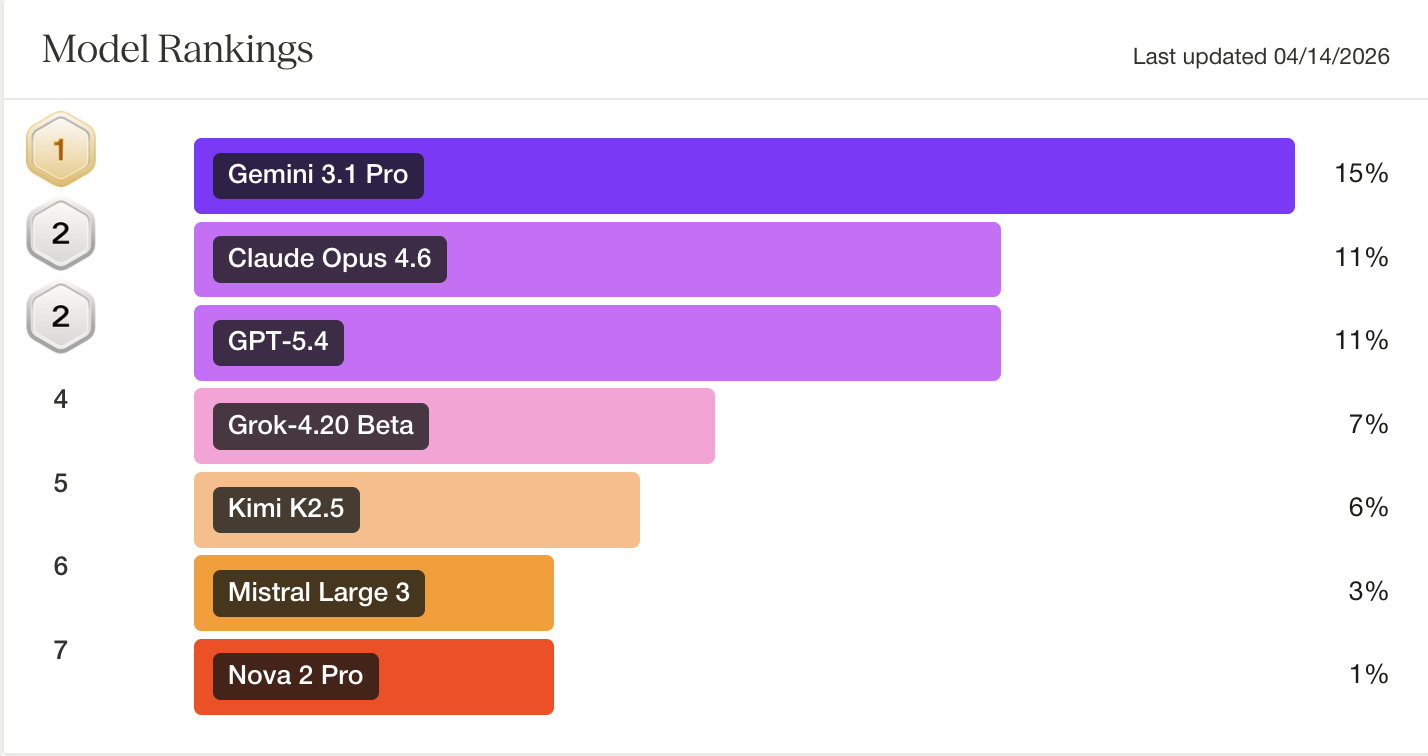

The result: Every frontier model scored under 15%.

Into the Real World

At Surge, we often build benchmarks for the ceiling. Hemingway-bench measures models' progress toward the Booker Prize. CoreCraft measures their ability to run a chaotic startup. Riemann-bench tests whether they can solve moonshot mathematics. We care about what's possible at the frontier.

We built GDP.pdf because real-world economic utility matters just as much. A model that can theorize about the Riemann hypothesis but gets lost in the fine print of a commercial lease is simply an intelligent liability.

Before we trust AI agents to manage the high-stakes workflows that drive the economy, they need to master the complex paperwork that sustains it.

View the full GDP.pdf benchmark results and failure examples here. The public set is available on Hugging Face here.